When Your Card Gets Stolen, a Factory Goes to Work

Your card details were stolen. A skimmer. A phishing page. Malware on a device you trusted.

The money is gone. But it cannot be spent yet.

Stolen funds are traceable. Move them directly and you leave a clear line back to the crime. So the fraudster does not move them alone. They build something first.

A network. A factory. A community of accounts whose only purpose is to make the money untraceable before it exits.

In a transaction network, a community is a group of accounts that transact significantly more with each other than with the outside world. Not friends. Not colleagues. A cluster of accounts behaving like a closed system.
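That definition can be turned into a number. The sketch below is a minimal illustration, not the method used in the case described here: the `Tx` record, the `closure_ratio` function and the sample accounts are all hypothetical names invented for the example. It measures what share of a group's transaction volume stays inside the group.

```python
from collections import namedtuple

# Hypothetical transaction record (an assumption for this sketch).
Tx = namedtuple("Tx", ["src", "dst", "amount"])

def closure_ratio(transactions, group):
    """Share of the group's transaction volume that stays inside the group."""
    group = set(group)
    internal = 0.0   # both sender and receiver are members
    boundary = 0.0   # exactly one side is a member
    for tx in transactions:
        src_in = tx.src in group
        dst_in = tx.dst in group
        if src_in and dst_in:
            internal += tx.amount
        elif src_in or dst_in:
            boundary += tx.amount
    total = internal + boundary
    return internal / total if total else 0.0

# Invented sample data: four accounts cycling money, one ordinary user.
transactions = [
    Tx("A", "B", 500), Tx("B", "C", 480), Tx("C", "D", 470), Tx("D", "A", 450),
    Tx("victim", "A", 100),            # money entering the cluster
    Tx("E", "landlord", 900), Tx("E", "grocer", 120), Tx("E", "A", 20),
]

ring = closure_ratio(transactions, {"A", "B", "C", "D"})
ordinary = closure_ratio(transactions, {"E"})
print(round(ring, 2), ordinary)  # 0.94 0.0
```

A ratio close to 1 means the group behaves like a closed system. What counts as "significantly more" is a threshold choice that would have to be calibrated against real traffic.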

In legitimate use, that rarely happens. People send money to dozens of different counterparties: rent, groceries, family, friends. Their transaction history is varied, open, connected to the world.

A criminal community is the opposite. Its members transact intensively with each other and rarely with anyone else. The closure is the signal. And it is invisible to any system that looks at accounts one at a time.

Every member of a money mule community has a role.

Some are collectors. They receive funds from multiple sources — cash deposits, transfers, payments — and pass them into the network. Their accounts look active. Their transactions look ordinary. Their role is to aggregate.

Some are distributors. They receive consolidated funds and disperse them rapidly across many destinations. Each outgoing transfer is small. Each looks unremarkable. Their role is to scatter.

Some are brokers. They sit between other members, passing money back and forth, adding layers between the original deposit and its final destination. Their role is to obscure.

Cash enters dirty. It passes through collectors, flows through brokers, exits through distributors. By the time it leaves the community, it has touched enough accounts and accumulated enough transaction history that its origin is practically gone.
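The three roles can be approximated from counterparties alone. The following is a rough heuristic sketch under stated assumptions: the function name `classify_roles`, the `min_fan` threshold and the sample edges are hypothetical, and a real system would also weigh amounts and timing. A collector is taken to be a member fed by several outside senders, a distributor a member paying out to several outside receivers, and a broker a member that both receives from and sends to other members.

```python
from collections import defaultdict

def classify_roles(edges, group, min_fan=2):
    """Label each member of `group` from (sender, receiver) edges."""
    group = set(group)
    ext_in = defaultdict(set)    # member -> outside senders
    ext_out = defaultdict(set)   # member -> outside receivers
    int_in = defaultdict(set)    # member -> members it receives from
    int_out = defaultdict(set)   # member -> members it sends to
    for src, dst in edges:
        if src in group and dst in group:
            int_out[src].add(dst)
            int_in[dst].add(src)
        elif dst in group:
            ext_in[dst].add(src)
        elif src in group:
            ext_out[src].add(dst)

    roles = {}
    for acct in sorted(group):
        n_in, n_out = len(ext_in[acct]), len(ext_out[acct])
        if n_in >= min_fan and n_in > n_out:
            roles[acct] = "collector"      # aggregates from outside
        elif n_out >= min_fan and n_out > n_in:
            roles[acct] = "distributor"    # scatters to outside
        elif int_in[acct] and int_out[acct]:
            roles[acct] = "broker"         # relays between members
        else:
            roles[acct] = "unclassified"
    return roles

# Invented sample: C1 collects, B1 and B2 relay, D1 distributes.
edges = [
    ("s1", "C1"), ("s2", "C1"), ("s3", "C1"),
    ("C1", "B1"), ("B1", "B2"), ("B2", "B1"), ("B2", "D1"),
    ("D1", "x1"), ("D1", "x2"), ("D1", "x3"),
]
roles = classify_roles(edges, {"C1", "B1", "B2", "D1"})
print(roles)
```

In this sample `C1` comes out as a collector, `B1` and `B2` as brokers, and `D1` as a distributor. The labels only make sense once the group itself has been identified; applied to a lone account, the same counts describe any busy user.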

The money is clean.

The individuals recruited into money mule networks are rarely professional criminals. According to Europol, they are students looking for part-time income. People in financial distress. Newcomers responding to what looks like a legitimate job offer — "payment processing agent", "local transfer coordinator", "financial assistant".

The recruitment happens on social media, job platforms, messaging apps. The offer is simple: receive transfers into your account, forward them elsewhere, keep a small commission. The recruiter never mentions fraud. The mule often does not understand what they are part of — until it is too late.

This is deliberate. An unwitting participant makes a more convincing account holder. Their transaction behavior is natural because they do not know they are performing a role. Their profile is clean because, in many respects, it genuinely is.

This is why individual account analysis fails so completely against these networks. The account holder may be a victim. The account itself may have a legitimate history. What is criminal is not the person — it is the structure they have been placed inside.

Ask any standard compliance system about any single account in this network.

The collector? Ordinary cash deposits. Normal amounts. No thresholds crossed.

The broker? Peer-to-peer transfers between private individuals. Both directions. Looks like friends splitting expenses.

The distributor? Small outgoing transfers to multiple accounts. Nothing unusual for an active user.

No alerts. No flags. No review.

This is not a coincidence. The community is built this way deliberately. Each member carries just enough of the crime to be functional — and not enough to be detectable alone.

The crime is collective. The detection is individual. That gap is the entire business model.

Traditional transaction monitoring was built to answer one question: is this account suspicious?

Community detection asks a different question: do these accounts, together, form something that none of them could be alone?
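As a deliberately crude illustration of asking the group-level question, the sketch below links two accounts when they have transacted repeatedly, then takes the connected components of that graph as candidate groups. This is a stand-in chosen for brevity, not the approach behind the case in this article; production systems typically rely on modularity-based methods such as Louvain or Leiden. The function name, thresholds and sample data are assumptions.

```python
from collections import Counter, defaultdict

def candidate_groups(transfers, min_transfers=3, min_size=3):
    """Group accounts connected by repeatedly used sender/receiver pairs."""
    # Count transfers per unordered pair of accounts.
    pair_counts = Counter(frozenset((s, d)) for s, d in transfers if s != d)

    # Keep only pairs that transact often enough to count as a strong tie.
    adj = defaultdict(set)
    for pair, n in pair_counts.items():
        if n >= min_transfers:
            a, b = tuple(pair)
            adj[a].add(b)
            adj[b].add(a)

    # Connected components over the strong ties (iterative depth-first search).
    seen, groups = set(), []
    for start in sorted(adj):
        if start in seen:
            continue
        stack, comp = [start], set()
        while stack:
            node = stack.pop()
            if node in comp:
                continue
            comp.add(node)
            stack.extend(adj[node] - comp)
        seen |= comp
        if len(comp) >= min_size:
            groups.append(comp)
    return groups

# Invented sample: a four-account ring plus a tenant paying rent three times.
transfers = (
    [("A", "B")] * 3 + [("B", "C")] * 3 + [("C", "D")] * 2 + [("D", "C")]
    + [("D", "A")] * 3 + [("E", "landlord")] * 3 + [("E", "A")]
)
groups = candidate_groups(transfers)
print(groups)
```

The tenant and landlord form a strong pair but not a group of three, so only the ring of `A`, `B`, `C` and `D` is returned. Any group found this way is a candidate for review, not a verdict.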

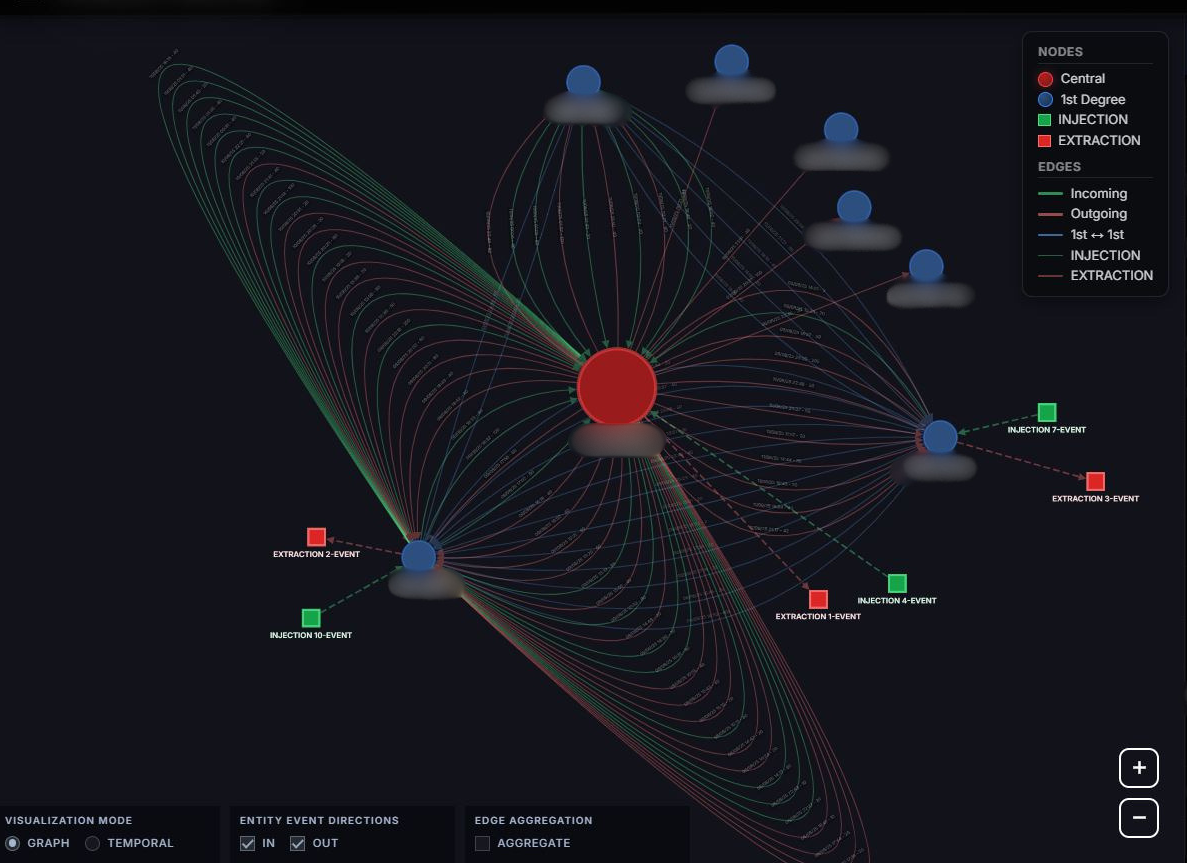

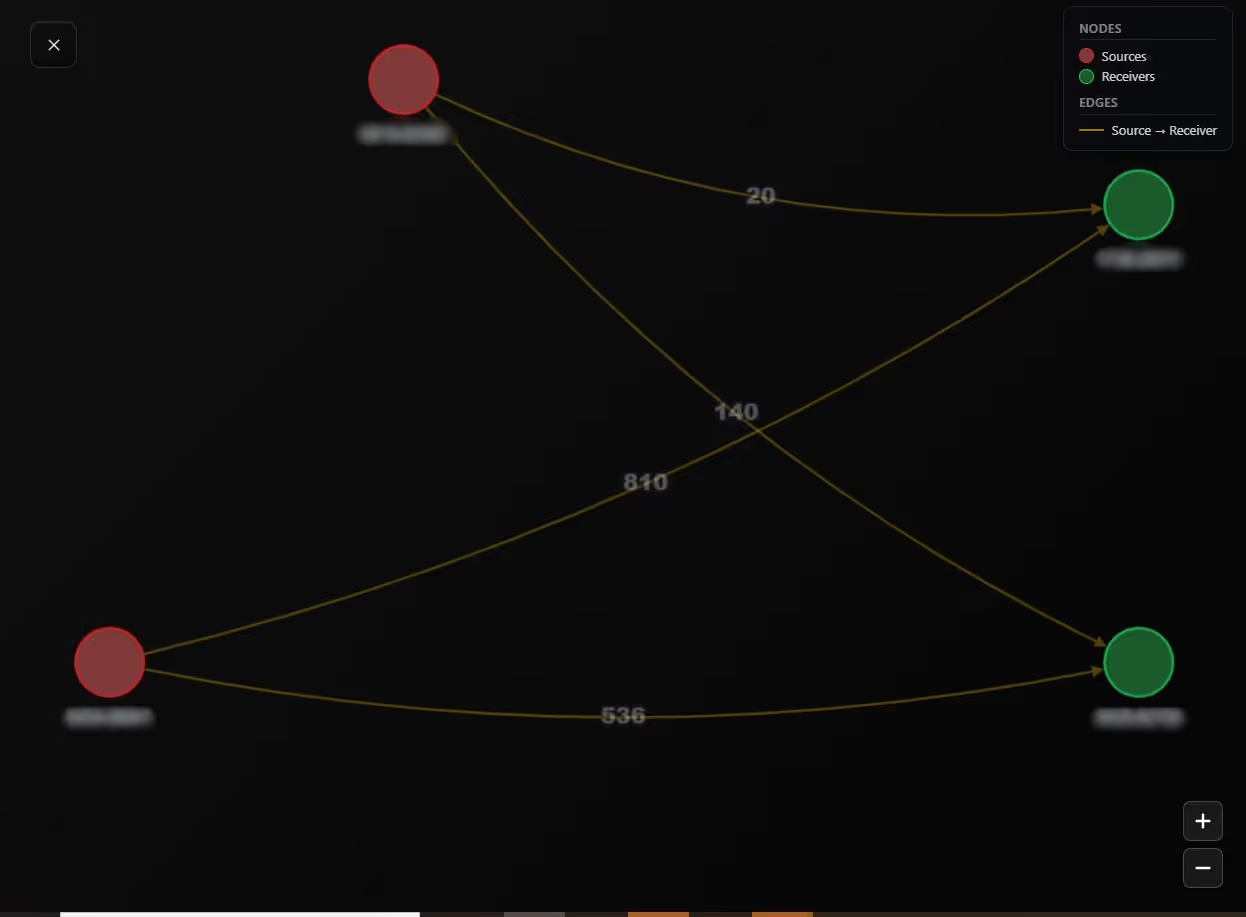

Four accounts. All connected to each other. All flagged as outliers. A compliance officer reviewing any one of them individually would close the file within minutes — nothing to see. But when you map the relationships between all four, the structure becomes immediately visible: a closed cluster of accounts whose internal transaction intensity has no legitimate explanation.

This is what community detection surfaces. Not individual suspicious transactions. The shape of a criminal network — who sends to whom, in what coordination, with what timing. Two accounts sending to the same two destinations simultaneously, repeatedly, over days. That is not two people who happen to share common contacts. That is coordination. That is a role being executed inside a structure.
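The timing pattern described above can be checked mechanically. The sketch below is one possible reading of it, with every name and threshold an assumption: timestamps are minutes since an arbitrary start, `window` is how close two sends must be to count as simultaneous, and a pair is reported only if it hits at least two shared destinations on at least two separate days.

```python
from collections import defaultdict
from itertools import combinations

MINUTES_PER_DAY = 24 * 60

def synchronized_pairs(transfers, window=10, min_days=2, min_shared_dst=2):
    """transfers: iterable of (minute, sender, destination) tuples."""
    by_dst = defaultdict(list)
    for minute, src, dst in transfers:
        by_dst[dst].append((minute, src))

    days = defaultdict(set)     # (a, b) -> days with a near-simultaneous send
    shared = defaultdict(set)   # (a, b) -> destinations both sent to together
    for dst, events in by_dst.items():
        # Pairwise comparison is quadratic per destination: fine for a sketch.
        for (t1, s1), (t2, s2) in combinations(sorted(events), 2):
            if s1 != s2 and abs(t1 - t2) <= window:
                pair = tuple(sorted((s1, s2)))
                days[pair].add(min(t1, t2) // MINUTES_PER_DAY)
                shared[pair].add(dst)

    return sorted(
        pair for pair in days
        if len(days[pair]) >= min_days and len(shared[pair]) >= min_shared_dst
    )

# Invented sample: A and B pay X and Y minutes apart on two consecutive days.
transfers = [
    (600, "A", "X"), (603, "B", "X"), (605, "A", "Y"), (607, "B", "Y"),
    (2040, "A", "X"), (2042, "B", "X"), (2045, "A", "Y"), (2049, "B", "Y"),
    (700, "E", "X"), (3000, "F", "X"),   # unrelated customers of X
]
flagged = synchronized_pairs(transfers)
print(flagged)  # [('A', 'B')]
```

`E` and `F` also pay `X`, but not in step with anyone, so they are not reported. Requiring repetition across days is what separates coordination from two people who happen to pay the same merchant at lunchtime.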

Once a community is identified, each member is classified by its role — collector, distributor, broker. The combination of those roles, the density of internal flows relative to external ones, the synchronisation of activity across members: together, they produce a structural signature that no group of independent legitimate users would generate.

In the case documented here, every account in the community was flagged as an outlier, yet none would have been detected from its own activity alone. Together, their structure revealed the network: the roles, the flows, and the coordination that exposed the ring.

Two questions that every compliance system should be capable of answering:

First: Which groups of accounts in my network transact significantly more with each other than with the outside world — and does the internal structure of those groups reflect organised criminal coordination?

Second: Within those groups, what roles are the accounts playing — and does the combination of collectors, distributors, and brokers match the documented architecture of a money mule network?

These are not questions about transaction amounts. They are not questions about individual profiles. They are questions about what a group of accounts becomes when it operates with a shared criminal purpose.

Your card was stolen in seconds. The network ran for days. Every member passed every check.

The question is not whether communities like this operate in your network today.

The question is whether your system is built to see the network — or only the accounts inside it.

© Thinsaction 2026 — No part of this article may be reproduced without attribution.